We tend to take our sense of touch for granted in everyday settings, but it is vital for our ability to interact with our surroundings.

Imagine reaching into the fridge to grab an egg for breakfast.

As your fingers touch its shell, you can tell the egg is cold, that its shell is smooth, and how firmly you need to grip it to avoid crushing it.

These are abilities that robots, even those directly controlled by humans, can struggle with.

A new artificial skin developed at Caltech can now give robots the ability to sense temperature, pressure, and even toxic chemicals through a simple touch.

This new skin technology is part of a robotic platform that integrates the artificial skin with a robotic arm and sensors that attach to human skin.

A machine-learning system that interfaces the two allows the human user to control the robot with their own movements while receiving feedback through their own skin.

The multimodal robotic-sensing platform, dubbed M-Bot, was developed in the lab of Wei Gao, Caltech’s assistant professor of medical engineering, investigator with Heritage Medical Research Institute, and Ronald and JoAnne Willens Scholar.

It aims to give humans more precise control over robots while also protecting the humans from potential hazards.

“Modern robots are playing a more and more important role in security, farming, and manufacturing,” Gao says. “Can we give these robots a sense of touch and a sense of temperature? Can we also make them sense chemicals like explosives and nerve agents or biohazards like infectious bacteria and viruses? We’re working on this.”

The skin

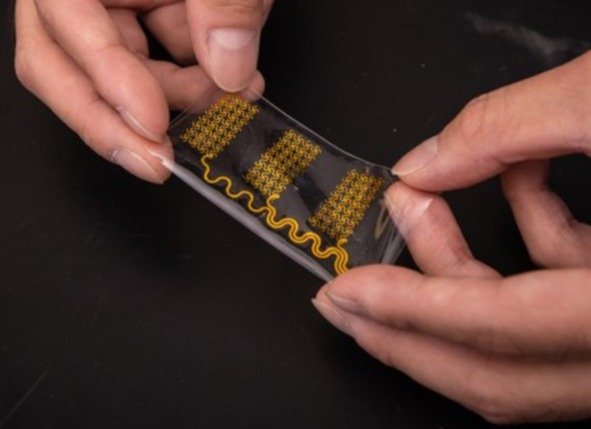

A side-by-side comparison of a human hand and a robotic hand reveals glaring differences. Whereas human fingers are soft, squishy, and fleshy, robotic fingers tend to be hard, metallic, plasticky, or rubbery. The printable skin developed in Gao’s lab is a gelatinous hydrogel and makes robot fingertips a lot more like our own.

Embedded within that hydrogel are the sensors that give the artificial skin its ability to detect the world around it. These sensors are literally printed onto the skin in the same way that an inkjet printer applies text to a sheet of paper.

“Inkjet printing has this cartridge that ejects droplets, and those droplets are an ink solution, but they could be a solution that we develop instead of regular ink,” Gao says. “We’ve developed a variety of inks of nanomaterials for ourselves.”

After printing a scaffolding of silver nanoparticle wires, the researchers can then print layers of micrometer-scale sensors that can be designed to detect a variety of things. The fact that the sensors are printed makes it quicker and easier for the lab to design and try out new kinds of sensors.

“When we want to detect one given compound, we make sure the sensor has a high electrochemical response to that compound,” Gao says. “Graphene impregnated with platinum detects the explosive TNT very quickly and selectively. For a virus, we are printing carbon nanotubes, which have very high surface area, and attaching antibodies for the virus to them. This is all mass producible and scalable.”

An interactive system

Gao’s team has coupled this skin to an interactive system that allows a human user to control the robot through their own muscle movements while receiving feedback from the robot’s skin on their own skin.

This part of the system makes use of additional printed parts—in this case, electrodes fastened to the human operator’s forearm.

The electrodes are similar to those that are used to measure brain waves, but they are instead positioned to sense the electrical signals generated by the operator’s muscles as they move their hand and wrist.

A simple flick of the human wrist tells the robotic arm to move up or down, and a clenching or splaying of the human fingers prompts a similar action by the robotic hand.

“We used machine learning to convert those signals into gestures for robotic control,” Gao says. “We trained the model on six different gestures.”
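The article does not say which model the team used, so the following is only a minimal sketch of the general idea: turning a short window of multi-channel muscle signals into one of a small set of gesture labels. It assumes a simple nearest-centroid classifier over per-channel RMS amplitudes as a stand-in technique, and the function names and gesture labels are hypothetical.

```python
import math

def rms_features(window):
    """Return the root-mean-square amplitude of each electrode channel.

    `window` is a list of samples; each sample is a list with one value
    per channel (a hypothetical data layout, not the lab's format).
    """
    n_channels = len(window[0])
    return [
        math.sqrt(sum(sample[c] ** 2 for sample in window) / len(window))
        for c in range(n_channels)
    ]

def fit_centroids(examples):
    """Average the feature vectors of each gesture label.

    `examples` is a list of (features, label) pairs.
    Returns a dict mapping each label to its mean feature vector.
    """
    sums, counts = {}, {}
    for features, label in examples:
        acc = sums.setdefault(label, [0.0] * len(features))
        for i, value in enumerate(features):
            acc[i] += value
        counts[label] = counts.get(label, 0) + 1
    return {
        label: [value / counts[label] for value in acc]
        for label, acc in sums.items()
    }

def classify(features, centroids):
    """Pick the gesture whose centroid is closest in Euclidean distance."""
    return min(centroids, key=lambda label: math.dist(features, centroids[label]))
```

In use, labeled recordings of each of the six gestures would be passed through `rms_features` and `fit_centroids` once, and each new window of signals would then be passed to `classify` to produce a command for the robot.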

The system also provides feedback to the human skin in the form of a very mild electrical stimulation. To return to the example of picking up an egg: if the operator gripped the egg too tightly with the robotic hand and was in danger of crushing its shell, the system would alert the operator through what Gao describes as “a little tingle” to the operator’s skin.
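As a rough illustration of this feedback loop, the sketch below maps a fingertip pressure reading to a stimulation level between 0 and 1. The article gives no thresholds or units and does not say whether the tingle is graded or simply on or off, so the function name, the kilopascal values, and the linear ramp are all assumptions made for the example.

```python
def feedback_intensity(pressure_kpa, warn_kpa=20.0, max_kpa=40.0):
    """Map robot fingertip pressure to a stimulation level in [0, 1].

    Below `warn_kpa` the operator feels nothing; between `warn_kpa` and
    `max_kpa` the level rises linearly; at or above `max_kpa` it is capped.
    Both thresholds are hypothetical placeholder values.
    """
    if pressure_kpa <= warn_kpa:
        return 0.0
    if pressure_kpa >= max_kpa:
        return 1.0
    return (pressure_kpa - warn_kpa) / (max_kpa - warn_kpa)
```

A gentle grip would return 0.0 and trigger no stimulation, while a grip approaching the upper threshold would return a value close to 1.0.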

Gao hopes the system will find applications in everything from agriculture to security to environmental protection, allowing the operators of robots to “feel” how much pesticide is being applied to a field of crops, whether a suspicious backpack left in an airport has traces of explosives on it, or the location of a pollution source in a river. First though, he wants to make some improvements.

“I think we have shown a proof of concept,” he says. “But we want to improve the stability of this robotic skin to make it last longer. By optimizing new inks and new materials, we hope this can be used for different kinds of targeted detections. We want to put it on more powerful robots and make them smarter, more intelligent.”

The paper describing the research, titled “All-printed soft human-machine interface for robotic physicochemical sensing,” appears in the June 1 issue of Science Robotics. Co-authors are medical engineering graduate students Jiahong Li, Samuel A. Solomon, Jihong Min, Changhao Xu, and Jiaobing Tu; postdoctoral scholar research associate in medical engineering Yu Song; former postdoctoral scholar research associate You Yu; and visiting student Wei Guo.

Funding for the research was provided by the National Institutes of Health, the Office of Naval Research, NASA’s Translational Research Institute for Space Health, the Tobacco-Related Disease Research Program, and the Carver Mead New Adventures Fund at Caltech.

Written by Emily Velasco.